The RAG approach requires document ingestion so that embeddings can be created to enable LLM-based search.
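As a rough illustration of what ingestion involves, the sketch below splits documents into overlapping chunks and stores one vector per chunk. It is a minimal sketch under loud assumptions: `chunk_text`, `toy_embed`, `ingest`, and `IndexedChunk` are hypothetical names, and the hashed bag-of-words vector is only a stand-in for a real embedding model, which this post does not specify at this level.

```python
import hashlib
import math
from dataclasses import dataclass

DIM = 64  # toy vector size (assumption); real embedding models use far more dimensions


def chunk_text(text: str, size: int = 40, overlap: int = 10) -> list[str]:
    """Split text into overlapping word windows so context is not lost at chunk edges."""
    words = text.split()
    step = size - overlap
    stop = max(len(words) - overlap, 1)
    return [" ".join(words[i:i + size]) for i in range(0, stop, step)]


def toy_embed(text: str) -> list[float]:
    """Stand-in embedding: hash each word into a bucket, then L2-normalize the counts."""
    vec = [0.0] * DIM
    for word in text.lower().split():
        bucket = int(hashlib.md5(word.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


@dataclass
class IndexedChunk:
    source: str             # which document the chunk came from
    text: str               # the chunk itself, returned to the LLM as context later
    embedding: list[float]  # vector used for similarity search


def ingest(documents: dict[str, str]) -> list[IndexedChunk]:
    """Build an in-memory index: one embedded entry per non-empty chunk."""
    index = []
    for source, text in documents.items():
        for chunk in chunk_text(text):
            if chunk:  # skip the empty chunk produced by an empty document
                index.append(IndexedChunk(source, chunk, toy_embed(chunk)))
    return index
```

In a real deployment the in-memory list would be replaced by a persistent index, and `toy_embed` by a call to an actual embedding model; the overall shape (chunk, embed, store) is the part this sketch is meant to show.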

By integrating RAG into Amazon Lex, we can provide accurate and comprehensive answers to user queries, resulting in a more engaging and satisfying user experience.
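One way to picture the integration is a Lambda-style handler that answers with RAG only when the bot would otherwise fall back. The following is a sketch, not the solution's actual code: `make_handler` and `answer_fn` are hypothetical names, the intent name `FallbackIntent` is an assumption about how the bot is configured, and the event and response dictionaries follow the general Amazon Lex V2 Lambda shape.

```python
from typing import Callable

FALLBACK_INTENT = "FallbackIntent"  # assumed name of the bot's fallback intent


def make_handler(answer_fn: Callable[[str], str]):
    """Wrap a question-answering function (hypothetical) as a Lex-style handler."""

    def handler(event: dict, context=None) -> dict:
        intent = dict(event["sessionState"]["intent"])

        if intent["name"] != FALLBACK_INTENT:
            # Not a fallback: hand control back to the bot's normal flow.
            return {"sessionState": {"dialogAction": {"type": "Delegate"},
                                     "intent": intent}}

        # Fallback: answer the user's raw utterance with the RAG function.
        question = event.get("inputTranscript", "")
        intent["state"] = "Fulfilled"
        return {
            "sessionState": {"dialogAction": {"type": "Close"}, "intent": intent},
            "messages": [{"contentType": "PlainText",
                          "content": answer_fn(question)}],
        }

    return handler
```

A deployment would bind the handler once, for example `lambda_handler = make_handler(my_rag_answer)`, where `my_rag_answer` is whatever function queries the index and calls the LLM.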

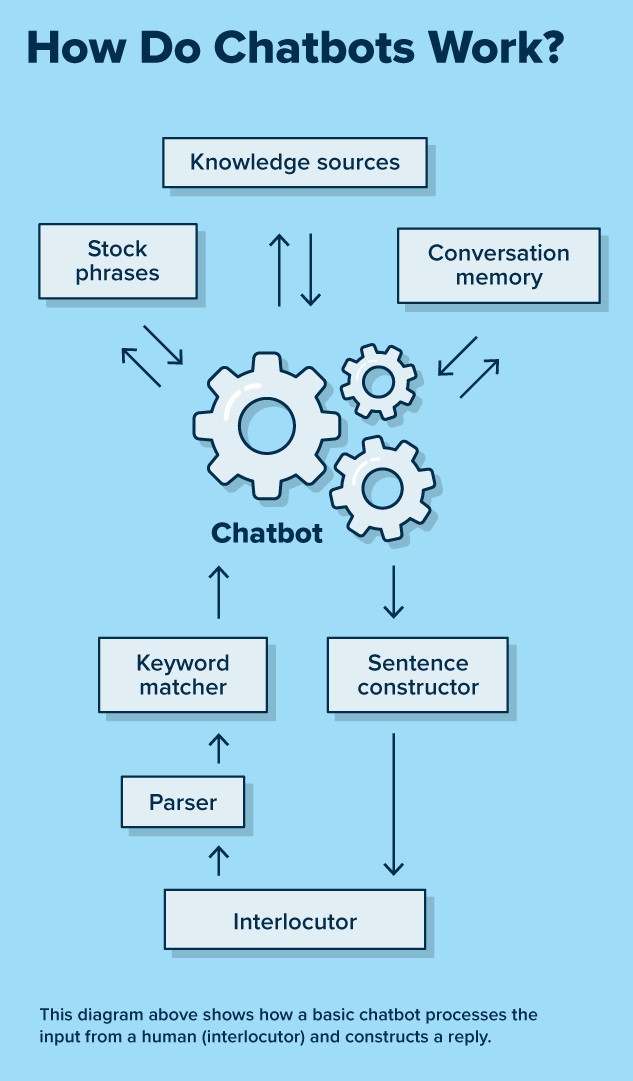

One of the benefits offered by LLMs is the ability to create relevant and compelling conversational self-service experiences. They do so by leveraging enterprise knowledge bases and delivering more accurate and contextual responses.

This blog post introduces a solution for augmenting Amazon Lex with LLM-based FAQ features using Retrieval Augmented Generation (RAG). We review how the RAG approach augments Amazon Lex FAQ responses using your company data sources. In addition, we demonstrate Amazon Lex integration with LlamaIndex, an open-source data framework that gives the bot developer flexibility in knowledge sources and formats. As a bot developer gains confidence using LlamaIndex to explore LLM integration, they can scale the Amazon Lex capability further. They can also use enterprise search services such as Amazon Kendra, which is natively integrated with Amazon Lex.

In this solution, we showcase the practical application of an Amazon Lex chatbot with LLM-based RAG enhancement. We use the Zappos customer support use case as an example to demonstrate the effectiveness of this solution, which takes the user through an enhanced FAQ experience (with LLM), rather than directing them to fallback (default, without LLM).

RAG combines the strengths of traditional retrieval-based and generative AI-based approaches to Q&A systems. This methodology harnesses the power of large language models, such as Amazon Titan or open-source models (for example, Falcon), to perform generative tasks in retrieval systems. It also takes into account the semantic context from stored documents more effectively and efficiently. RAG starts with an initial retrieval step to retrieve relevant documents from a collection based on the user’s query. It then employs a language model to generate a response by considering both the retrieved documents and the original query.
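The two RAG steps, retrieving relevant documents and then generating a response from them plus the original query, can be sketched in a few lines. This is an illustrative sketch only: the word-count cosine similarity stands in for real semantic embeddings, `llm` is any callable you supply (no specific model API is assumed), and the function names are hypothetical.

```python
import math
import re
from collections import Counter
from typing import Callable


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query: str, passages: list[str], k: int = 2) -> list[str]:
    """Step 1: rank passages by similarity to the query and keep the top k."""
    q = Counter(tokenize(query))
    ranked = sorted(passages,
                    key=lambda p: cosine(q, Counter(tokenize(p))),
                    reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Combine the retrieved passages and the original query into one prompt."""
    joined = "\n".join(f"- {p}" for p in context)
    return ("Answer the question using only the context below.\n\n"
            f"Context:\n{joined}\n\nQuestion: {query}\nAnswer:")


def answer(query: str, passages: list[str],
           llm: Callable[[str], str], k: int = 2) -> str:
    """Step 2: let the language model generate from retrieved context + query."""
    return llm(build_prompt(query, retrieve(query, passages, k)))
```

For a quick check without any model, pass `llm=lambda prompt: prompt` to inspect exactly what the language model would receive for a given question and passage list.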
Today, large language models (LLMs) are transforming the way developers and enterprises solve historically complex challenges related to natural language understanding (NLU). We announced Amazon Bedrock recently, which democratizes foundation model access for developers to easily build and scale generative AI-based applications, using familiar AWS tools and capabilities.

One of the challenges enterprises face is to incorporate their business knowledge into LLMs to deliver accurate and relevant responses. When leveraged effectively, enterprise knowledge bases can be used to deliver tailored self-service and assisted-service experiences, by delivering information that helps customers solve problems independently and/or augmenting an agent’s knowledge.

Today, a bot developer can improve self-service experiences without utilizing LLMs in a couple of ways. First, they can create intents, sample utterances, and responses that cover all anticipated user questions within an Amazon Lex bot. Second, developers can integrate bots with search solutions, which can index documents stored across a wide range of repositories and find the most relevant document to answer their customer’s question. These methods are effective, but they require developer resources, which makes getting started difficult.

Amazon Lex is a service that allows you to quickly and easily build conversational bots (“chatbots”), virtual agents, and interactive voice response (IVR) systems for applications such as Amazon Connect.

Artificial intelligence (AI) and machine learning (ML) have been a focus for Amazon for over 20 years, and many of the capabilities that customers use with Amazon are driven by ML.
